
    Application.StatusBar = "This row is a dead link. Moving on to row " & Count + 1 & " of " & LastRow & "."

Before you write any Python code, you need to get to know the website that you want to scrape. That should be your first step for any web scraping project you want to tackle. You'll need to understand the site structure to extract the information that's relevant for you.

How to Build a Web Scraper With Python: Step-by-Step Guide. The guide will take you through understanding HTML web pages, building a web scraper using Python, and creating a DataFrame with pandas.

Web scraping helps you innovate faster because you can test and execute new ideas faster. By giving everyone easy access to web data, it also raises the bar for innovation and forces you to enhance your value proposition.

Fragments of the macro:

    Set rRng = sht.Range("b4904:b" & LastRow)
    Set ie = CreateObject("internetexplorer.application")
    RCell.Value = ie.Document.getElementById("content").innerText
    Application.StatusBar = BadCount & " dead links so far. Now at row " & Status & Count & " of " & LastRow & "."
    Application.StatusBar = BadCount & " dead links so far. Moving on to row " & Status & Count + 1 & " of " & LastRow & "."
    Application.StatusBar = "Macro finished running with " & BadCount & " errors."
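The macro reads the page text with `getElementById("content").innerText`. As a rough Python counterpart, here is a minimal sketch that pulls the text of the element with `id="content"` out of an HTML string using only the standard library. The class name `ContentExtractor`, the helper `extract_content`, and the sample HTML are made up for illustration; a real project might prefer a dedicated parsing library.

```python
from html.parser import HTMLParser

# Tags that never have a closing tag, so they must not affect nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}


class ContentExtractor(HTMLParser):
    """Collects the text found inside the element with a given id."""

    def __init__(self, target_id="content"):
        super().__init__()
        self.target_id = target_id
        self.depth = 0    # nesting depth inside the target element; 0 means outside
        self.parts = []   # text chunks collected so far

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self.depth:
            self.depth += 1
        elif dict(attrs).get("id") == self.target_id:
            self.depth = 1

    def handle_endtag(self, tag):
        if self.depth and tag not in VOID_TAGS:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth:
            self.parts.append(data)


def extract_content(html, target_id="content"):
    """Return the whitespace-normalized text of the target element, or ''."""
    parser = ContentExtractor(target_id)
    parser.feed(html)
    parser.close()
    return " ".join(" ".join(parser.parts).split())


# Made-up sample page, only to show the call.
sample_html = (
    '<html><body><div id="nav">Menu</div>'
    '<div id="content"><h1>Fireball</h1>'
    '<p>A bright <b>streak</b> flashes.<br>Range: 150 feet</p></div>'
    '<p>Footer</p></body></html>'
)
content_text = extract_content(sample_html)
```

Knowing which element holds the data you want (here, the one with `id="content"`) is exactly what inspecting the site structure gives you.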

To make Scrapy start scraping and then output to a CSV file, enter the following into your command prompt: `scrapy crawl oscars -o oscars.csv`. You will see a large output, and after a couple of minutes it will complete and you will have a CSV file sitting in your project folder.

When the macro skips a row, it goes to the link in row 10 but pastes the content it scraped into row 11, skips the row 11 link, and then proceeds as normal from row 12 (these row numbers are just examples; the error is both intermittent and continuous). Also, if I set `IE.Visible` to False, the problem gets worse, occurring more often.

Can anyone help me figure this out, so that the scraper is more consistent? Here's my code:

    Private Sub Wait(ByVal nSec As Long)
    Set sht = ThisWorkbook.Worksheets("Spells")
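The skipped-row symptom looks like it could be a timing problem (for example, reading the page before it has finished loading), although nothing in the question confirms that. The following is a minimal Python sketch, not the VBA macro itself, of two habits that make this kind of drift harder to hit: poll for readiness instead of sleeping a fixed number of seconds, and store each result under the row number that came with its link. The names `wait_until`, `scrape_rows`, `fetch`, and the example URLs are hypothetical.

```python
import time


def wait_until(is_ready, timeout=10.0, interval=0.1):
    """Poll is_ready() until it returns True or the timeout elapses."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        if is_ready():
            return True
        time.sleep(interval)
    return False


def scrape_rows(links, fetch):
    """Scrape (row, url) pairs; return ({row: text or None}, dead_link_count).

    fetch(url) is assumed to return the page text, or None for a dead link.
    Each result is keyed by the row its URL came from, so a dead link leaves
    that row empty instead of shifting later results.
    """
    results = {}
    bad_count = 0
    for row, url in links:
        text = fetch(url)
        if text is None:
            bad_count += 1
        results[row] = text
    return results, bad_count


# Simulated "page is loading" state: ready on the third poll.
state = {"polls": 0}


def page_loaded():
    state["polls"] += 1
    return state["polls"] >= 3


loaded = wait_until(page_loaded, timeout=1.0, interval=0.01)

# Simulated pages: the row 11 link is dead.
fake_pages = {
    "https://example.com/a": "Spell A",
    "https://example.com/c": "Spell C",
}
links = [
    (10, "https://example.com/a"),
    (11, "https://example.com/b"),
    (12, "https://example.com/c"),
]
results, bad_count = scrape_rows(links, fake_pages.get)
```

In the simulated run, the dead link in row 11 is counted and recorded as empty, while rows 10 and 12 keep their own content.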

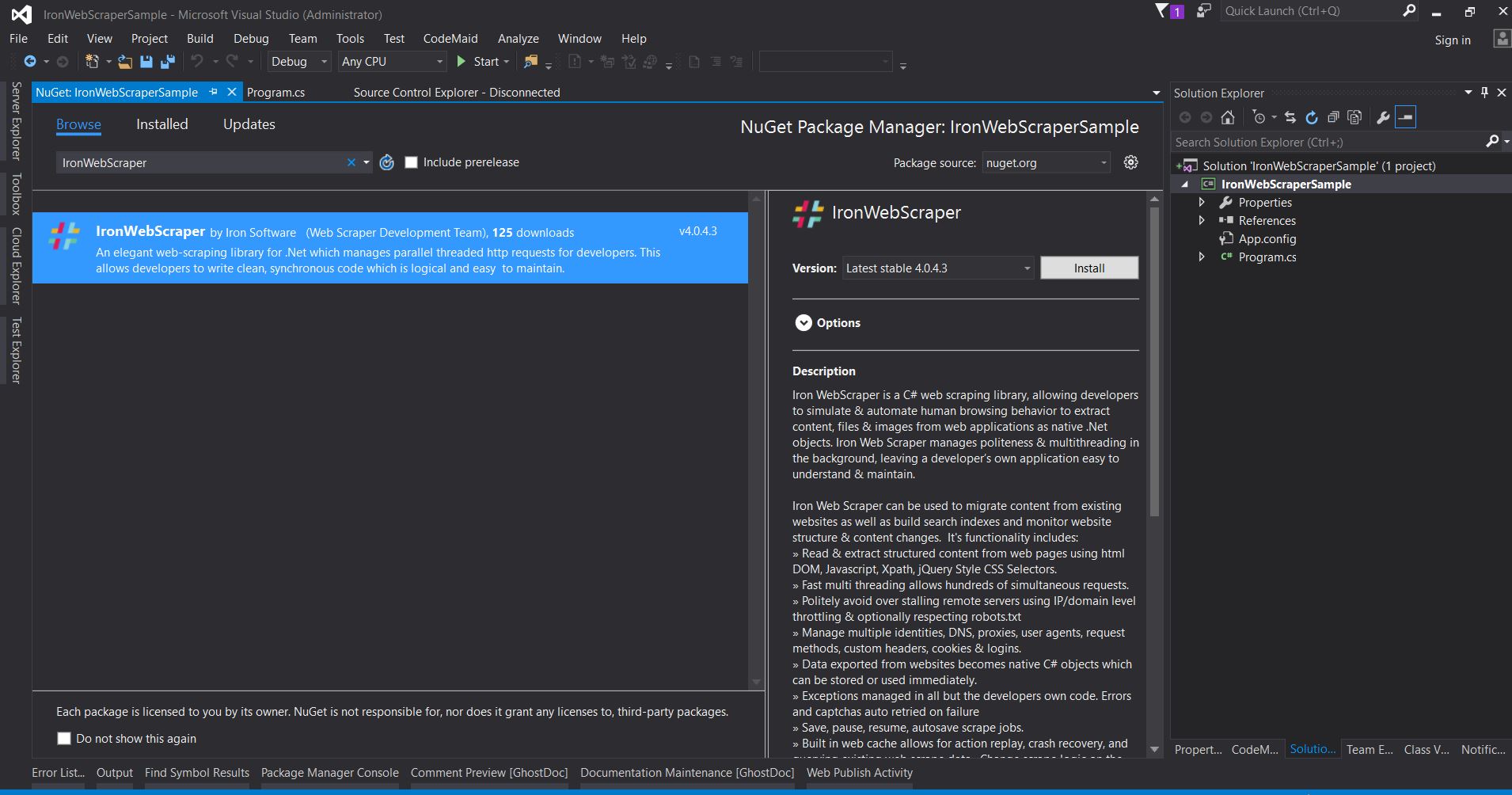

So, I've built a web scraper (with lots of help) to pull info from a particular website. However, it will occasionally skip a row in my spreadsheet.

Web scraping can be difficult, particularly because most popular sites actively try to prevent developers from scraping them, using techniques such as IP address detection, HTTP request header checking, CAPTCHAs, JavaScript checks, and more.
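To make "HTTP request header checking" concrete: a server can inspect the headers a client sends, such as `User-Agent`, and treat missing or generic values differently. Here is a small sketch of building a request with explicit, honest headers using the standard library. Nothing is sent over the network; the helper name `build_request`, the URL, and the header values are placeholders.

```python
import urllib.request


def build_request(url, user_agent):
    """Build (but do not send) a GET request with explicit headers."""
    headers = {
        "User-Agent": user_agent,     # identifies the client to the server
        "Accept": "text/html",        # the content type the scraper expects
    }
    return urllib.request.Request(url, headers=headers)


# Placeholder values for illustration only.
request = build_request(
    "https://example.com/spells/1",
    "example-scraper/0.1 (contact: admin@example.com)",
)

# urllib stores header names capitalized, e.g. "User-agent".
sent_user_agent = request.get_header("User-agent")
```

Passing the request to `urllib.request.urlopen` would actually send it; check the site's terms and `robots.txt` before doing so.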
